Publications & Competitions

Academic publications and Kaggle competition solutions with open-source code. Click through to explore each work in more detail.

Publications

2026

"Model-Agnostic Online Certificate-Driven Calibration for Time Series Forecasting Under Distribution Shift"

Under Review

Time series out-of-distribution generalization requires forecasters to remain reliable when deployment dynamics differ from training conditions due to covariate shift, concept shift, and temporal dependence. Probably Approximately Correct Bayesian domain adaptation provides computable certificates by decomposing target risk into a source risk term, a source-to-target mismatch term, and a complexity term, but standard analyses rely on independent sampling and distributional stability, assumptions that are violated in time series by serial dependence and nonstationary shift. We propose a model-agnostic online martingale Probably Approximately Correct Bayesian framework that yields finite-sample certificates under temporal dependence and distribution shift. The certificate replaces independent-sample concentration with martingale concentration that adapts to loss scale and predictable variation. We use the certificate as a surrogate regularizer for online calibration by training a gated residual Bayesian head on top of a fixed forecasting backbone, producing a corrective update that reverts to the backbone prediction when the gate is closed. Online calibration combines a source risk anchor, a posterior-shift penalty, and a time-adaptive mismatch term computed from target windows observed before forecasting. It follows a predict-then-update protocol in which outcomes become available only after forecasting and are used to update subsequent predictions. Experiments across convolutional, attention-based, and large language model-based forecasters show improved stability and accuracy under covariate and concept shift.
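
The predict-then-update protocol and the gated correction can be illustrated with a minimal sketch. It is not the paper's method: the linear residual head, the squared-error gradient step, and the names `gated_prediction`, `online_calibrate`, `lr`, and `shrink` are assumptions chosen for illustration, and the L2 shrinkage term only stands in for the posterior-shift penalty.

```python
import numpy as np

def gated_prediction(backbone_pred, features, weights, gate):
    """Backbone forecast plus a gated linear correction (hypothetical head)."""
    return backbone_pred + gate * float(features @ weights)

def online_calibrate(backbone_preds, features, targets, gate=1.0, lr=0.05, shrink=0.01):
    """Predict-then-update loop: forecast first, then learn from the revealed outcome."""
    weights = np.zeros(features.shape[1])
    preds = np.empty(len(targets))
    for t in range(len(targets)):
        # Predict using only information available before targets[t] is observed.
        preds[t] = gated_prediction(backbone_preds[t], features[t], weights, gate)
        # The outcome arrives after forecasting; take one gradient step on
        # squared error plus an L2 penalty that keeps the head near zero.
        error = preds[t] - targets[t]
        grad = 2.0 * error * gate * features[t] + 2.0 * shrink * weights
        weights -= lr * grad
    return preds, weights
```

With `gate=0.0` the output equals the backbone forecast exactly, which mirrors the fallback behavior described in the abstract.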

"PAC-Bayesian Meta-Learning for Few-Shot Identification of Linear Dynamical Systems"

Under Review

Identifying linear time-invariant (LTI) dynamical systems from data is especially challenging when trajectories are short, noisy, or high-dimensional. Traditional system identification methods typically treat each system in isolation and therefore discard shared information that may exist across related systems. We propose a PAC-Bayesian Meta-Learning framework for LTI system identification (PBML-LTI) that explicitly leverages cross-task structure while preserving task-level heterogeneity. Each task corresponds to an unknown LTI system, and a meta-learner uses a collection of training trajectories to learn a data-dependent prior over system parameters. Given a new system with limited trajectory data, the method performs Bayesian inference to produce a posterior distribution over the new system's parameters, enabling calibrated uncertainty quantification and principled adaptation in the few-shot regime.

A key technical challenge is temporal dependence: trajectories generated by LTI systems violate i.i.d. assumptions underlying standard learning theory. To address this, we develop generalization guarantees for meta-learned priors under sequential dependence using martingale-based PAC-Bayes analysis with sub-normalized concentration tools. The resulting bounds characterize how the quality of the learned prior controls expected identification error on unseen systems, with explicit dependence on trajectory length, noise, and the divergence between task posteriors and the meta-prior. This connects uncertainty-aware meta-identification with finite-sample theory for dependent dynamical data.
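
As a rough illustration of few-shot adaptation from a learned prior, the sketch below computes a MAP estimate of the state matrix `A` for a system `x[t+1] = A x[t] + w[t]` under an isotropic Gaussian prior centered at a prior mean. The prior form, the function name `map_state_matrix`, and the parameters `prior_mean` and `prior_precision` are assumptions; the abstract does not specify the actual prior or inference procedure.

```python
import numpy as np

def map_state_matrix(trajectory, prior_mean, prior_precision):
    """MAP estimate of A from one trajectory of shape (T + 1, n).

    Assumes unit-variance process noise and a Gaussian prior on A with
    mean `prior_mean` and scalar precision `prior_precision` (hypothetical).
    """
    X = trajectory[:-1].T  # states x_0 .. x_{T-1}, shape (n, T)
    Y = trajectory[1:].T   # states x_1 .. x_T,     shape (n, T)
    n = X.shape[0]
    lhs = X @ X.T + prior_precision * np.eye(n)
    rhs = Y @ X.T + prior_precision * prior_mean
    # A_hat = rhs @ inv(lhs); lhs is symmetric, so solve the transposed system.
    return np.linalg.solve(lhs, rhs.T).T
```

With a large `prior_precision` the estimate stays close to the prior mean, which is the regime that matters for very short trajectories; with `prior_precision=0` it reduces to ordinary least squares.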

"Confidence-Based Handover for Causal Bayesian Optimization with Adaptive Expert Trust"

Under Review

Causal Bayesian Optimization (CBO) has emerged as a powerful paradigm for decision-making under uncertainty, effectively leveraging causal structures to guide interventions. However, existing CBO methods do not adequately integrate domain-specific expertise, potentially leading to inefficient exploration and suboptimal outcomes in real-world applications involving complex human decision-making and economic considerations. To bridge this gap, we propose Expert-guided Causal Bayesian Optimization (ECBO), a framework that integrates human expert knowledge into the CBO process. We introduce an expert weighting mechanism that adaptively modulates the Expected Improvement (EI) acquisition function, reflecting expert endorsements or exclusions of candidate solutions. By embedding these expert-derived preferences, our model proactively balances exploration and exploitation. Additionally, we implement a robust harm-free mechanism to guard against potentially detrimental expert interventions, alongside an adaptive trust parameter that dynamically adjusts expert influence based on observed discrepancies. Our experimental results underscore the practical value of effectively incorporating expert knowledge in complex causal environments.
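
One simple way to picture expert-modulated acquisition is sketched below. The Expected Improvement formula is the standard one for minimization under a Gaussian posterior; the multiplicative weighting `weights ** trust` and the names `expert_weighted_ei`, `expert_weights`, and `trust` are assumptions for illustration, since the abstract does not state the functional form used by ECBO.

```python
import math
import numpy as np

def expected_improvement(mu, sigma, best):
    """Expected Improvement for minimization; assumes sigma > 0 everywhere."""
    mu = np.asarray(mu, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    z = (best - mu) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (best - mu) * cdf + sigma * pdf

def expert_weighted_ei(ei, expert_weights, trust):
    """Scale EI by expert weights raised to a trust exponent (hypothetical form).

    expert_weights: values in [0, 1]; 0 excludes a candidate, 1 endorses it.
    trust: 0 ignores the expert entirely, 1 applies the weights in full.
    """
    weights = np.asarray(expert_weights, dtype=float)
    return np.asarray(ei, dtype=float) * weights ** trust
```

Setting `trust=0.0` recovers plain EI, so lowering trust after observed discrepancies gradually removes the expert's influence.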

"A Causal Perspective on Jump-Diffusion for Time-Series Anomaly Detection"

Under Review

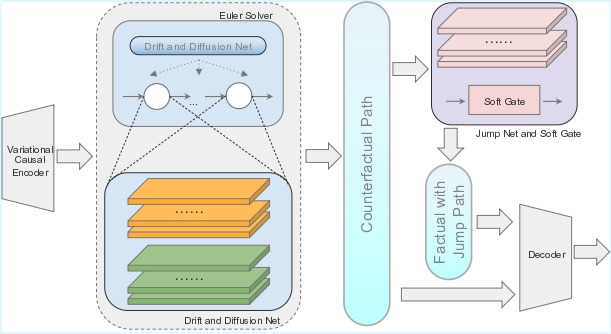

Time series anomaly detection is essential for maintaining robustness in dynamic real-world systems. However, most existing methods rely on static distribution assumptions, while overlooking the latent causal structures and structural shifts that underlie real-world temporal dynamics. This often leads to poor explanation of anomalies and misclassification of environment-induced variations. To address these shortcomings, we propose Causal Soft Jump Diffusion Anomaly Detection (CSJD-AD), a novel framework that models both latent dynamics and soft-gated expected jumps through a structural jump diffusion process. We adopt a causal perspective grounded in environment-conditioned invariance by inferring discrete environment states and conditioning the jump-augmented process on them, yielding a practical detector for unlabeled sensor streams without aiming to identify true interventions. By generating paired counterfactual and factual trajectories, the model explicitly contrasts causally consistent behavior with unexplained deviations. Our method achieves state-of-the-art performance across benchmark datasets, demonstrating the importance of incorporating causal reasoning and jump-aware dynamics into time series anomaly detection.

2025

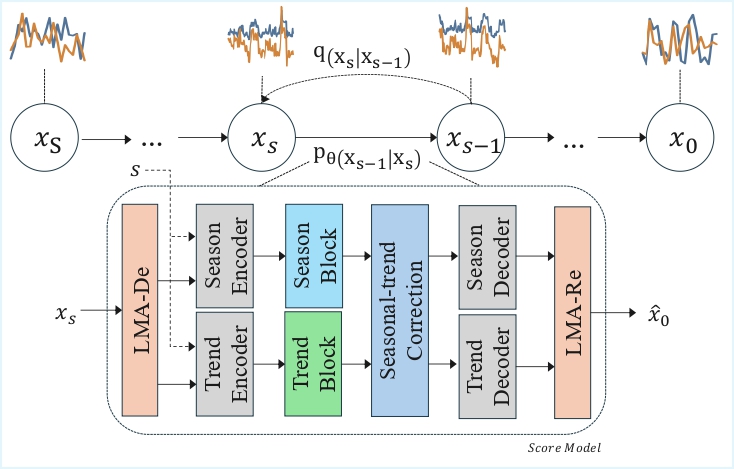

We introduce STDiffusion, a generative framework for time series that explicitly decomposes each sequence into trend, seasonal and stochastic components. A lightweight decomposition module produces component-wise representations; dedicated component learners capture long-term trend and periodicity, while a diffusion backbone models residual innovations conditioned on those components. This design yields faithful, structure-aware samples and enables controllable generation and augmentation for downstream forecasting tasks. The figure on the left shows the overall architecture.
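
To make the trend, seasonal, and residual split concrete, here is a minimal sketch using a classical additive moving-average decomposition. It is a stand-in, not STDiffusion's learned decomposition module, and it covers only the decomposition step (no diffusion model); the function name `decompose` and the fixed `period` argument are assumptions.

```python
import numpy as np

def decompose(series, period):
    """Additive split into trend, seasonal, and residual parts.

    Assumes a known seasonal period and len(series) >= period.
    """
    series = np.asarray(series, dtype=float)
    n = len(series)
    # Trend: moving average over one period, with edge padding to keep length n.
    left = period // 2
    right = period - 1 - left
    padded = np.pad(series, (left, right), mode="edge")
    trend = np.convolve(padded, np.ones(period) / period, mode="valid")
    # Seasonal: mean detrended value at each phase, centered to zero mean.
    detrended = series - trend
    phase_means = np.array([detrended[p::period].mean() for p in range(period)])
    phase_means -= phase_means.mean()
    seasonal = np.tile(phase_means, n // period + 1)[:n]
    # Residual: whatever trend and seasonality do not explain.
    residual = series - trend - seasonal
    return trend, seasonal, residual
```

In this picture, the residual is the part a generative model would need to capture, while the other two components carry the controllable structure.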

Competitions

Data Mining: Child Mind Institute Detect Behavior with Sensor Data

...

Developed a top 1% silver-medal solution for the Kaggle CMI-DBSD competition by designing a multimodal time-series model with CNN+SE, physics-inspired IMU features, and subject-level Hungarian assignment.
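
A minimal sketch of Hungarian assignment as a post-processing step is shown below. It assumes that each class occurs at most once among one subject's sequences and that there are no more sequences than classes; those assumptions, and the function name `assign_unique_labels`, are illustrative and not confirmed details of the competition solution.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment

def assign_unique_labels(probs):
    """Pick one distinct class per sequence, maximizing total log-probability.

    probs: array of shape (n_sequences, n_classes) for a single subject,
    with n_sequences <= n_classes (assumed).
    """
    log_probs = np.log(np.asarray(probs, dtype=float) + 1e-12)
    rows, cols = linear_sum_assignment(log_probs, maximize=True)
    labels = np.empty(log_probs.shape[0], dtype=int)
    labels[rows] = cols
    return labels
```

For `probs = [[0.6, 0.4], [0.9, 0.1]]`, independent argmax would label both sequences as class 0, whereas the joint assignment gives the second sequence class 0 and moves the first to class 1.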

Data Mining: Child Mind Institute Problematic Internet Use

...

Developed a top 3% silver-medal solution for the Kaggle CMI-PIU competition by integrating tabular and time-series features, training tree-based regressors with cross-validation, and optimizing thresholds to maximize Quadratic Weighted Kappa.
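
Threshold optimization for Quadratic Weighted Kappa can be sketched as follows: continuous regressor outputs are cut into ordinal classes, and the cut points are tuned by a simple coordinate search. The search strategy, the candidate `grid`, and the function names are assumptions for illustration, not the solution's actual optimizer.

```python
import numpy as np

def quadratic_weighted_kappa(y_true, y_pred, n_classes):
    """QWK from the confusion matrix; assumes labels are ints in [0, n_classes)."""
    observed = np.zeros((n_classes, n_classes))
    for t, p in zip(y_true, y_pred):
        observed[t, p] += 1
    idx = np.arange(n_classes)
    weights = (idx[:, None] - idx[None, :]) ** 2 / (n_classes - 1) ** 2
    expected = np.outer(observed.sum(axis=1), observed.sum(axis=0)) / observed.sum()
    return 1.0 - (weights * observed).sum() / (weights * expected).sum()

def apply_thresholds(scores, thresholds):
    """Map continuous scores to ordinal classes using sorted cut points."""
    return np.digitize(scores, np.sort(thresholds))

def tune_thresholds(scores, y_true, n_classes, grid, n_rounds=3):
    """Coordinate search: adjust one cut point at a time, keep strict improvements."""
    thresholds = np.arange(n_classes - 1) + 0.5  # default: round to nearest class
    for _ in range(n_rounds):
        for i in range(len(thresholds)):
            best_t = thresholds[i]
            best_k = quadratic_weighted_kappa(
                y_true, apply_thresholds(scores, thresholds), n_classes)
            for cand in grid:
                trial = thresholds.copy()
                trial[i] = cand
                k = quadratic_weighted_kappa(
                    y_true, apply_thresholds(scores, trial), n_classes)
                if k > best_k:
                    best_t, best_k = cand, k
            thresholds[i] = best_t
    return np.sort(thresholds)
```

Because a cut point changes only on a strict improvement, the tuned kappa on the tuning data is never below the default-threshold kappa; in practice the thresholds would be tuned on out-of-fold predictions to limit overfitting.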

Large Language Model: MAP - Charting Student Math Misunderstandings

...

Developed a top 5% silver-medal solution for the Kaggle MAP competition by fine-tuning LLMs with LoRA and using family-prefix filtering and a cross-model, consistency-weighted ensemble to improve accuracy.